Mirror of https://github.com/huggingface/text-generation-inference.git (synced 2025-09-10 20:04:52 +00:00)

Small rearrangement in index page

parent 862db1be0c

commit ec592d550e

@@ -1,8 +1,8 @@

|

||||

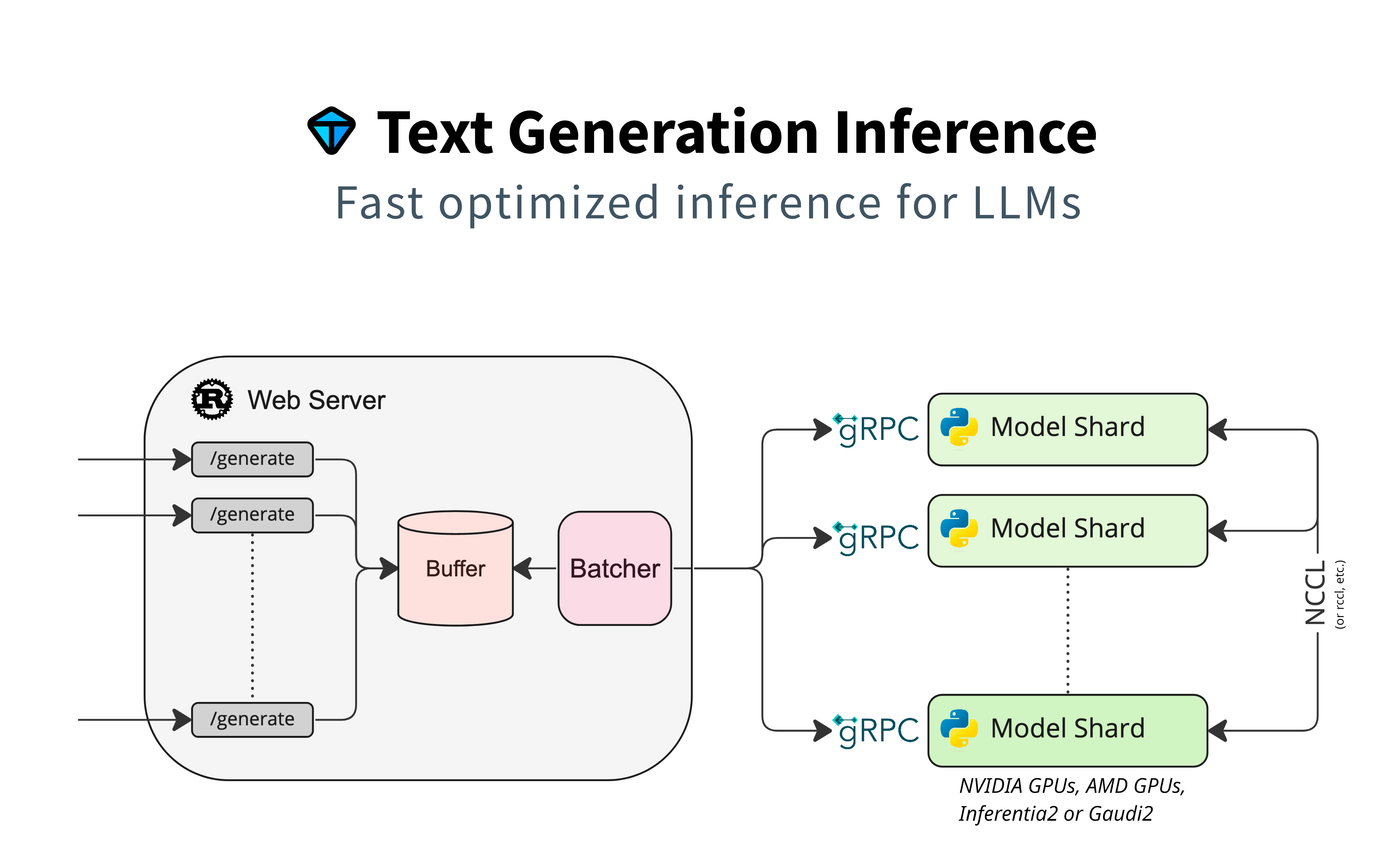

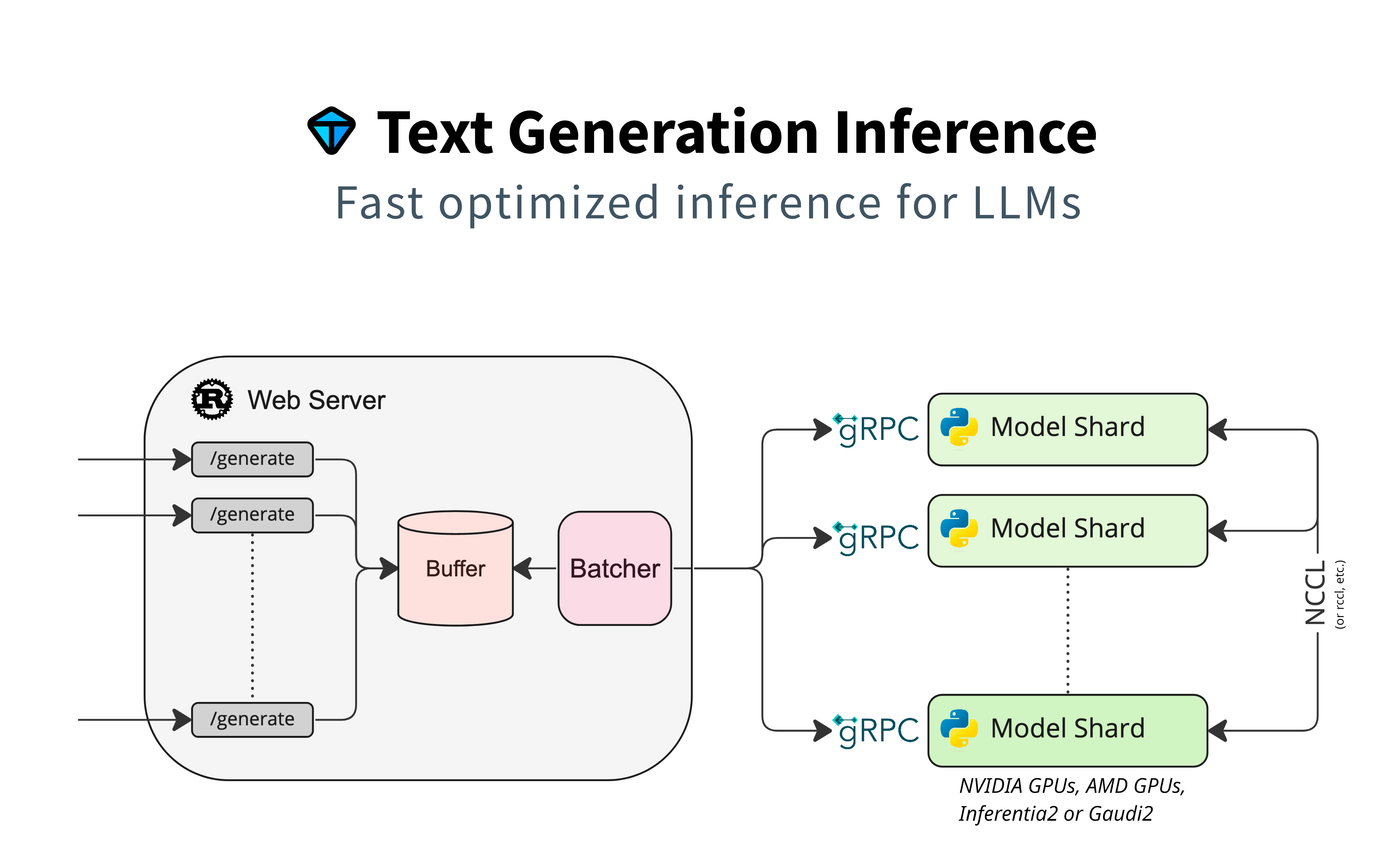

# Text Generation Inference

Text Generation Inference (TGI) is a toolkit for deploying and serving Large Language Models (LLMs). TGI enables high-performance text generation for the most popular open-source LLMs, including Llama, Falcon, StarCoder, BLOOM, GPT-NeoX, and T5.

Text Generation Inference (TGI) is built for deploying and serving Large Language Models (LLMs). TGI enables high-performance text generation for the most popular open-source LLMs, including Llama, Falcon, StarCoder, BLOOM, GPT-NeoX, and T5.

Text Generation Inference implements many optimizations and features, such as:

@@ -11,7 +11,7 @@ Text Generation Inference implements many optimizations and features, such as:

- Tensor Parallelism for faster inference on multiple GPUs

- Token streaming using Server-Sent Events (SSE)

- Continuous batching of incoming requests for increased total throughput

- Optimized transformers code for inference using [flash-attention](https://github.com/HazyResearch/flash-attention) and [Paged Attention](https://github.com/vllm-project/vllm) on the most popular architectures

- Optimized transformers code for inference using [Flash Attention](https://github.com/HazyResearch/flash-attention) and [Paged Attention](https://github.com/vllm-project/vllm) on the most popular architectures

- Quantization with [bitsandbytes](https://github.com/TimDettmers/bitsandbytes) and [GPT-Q](https://arxiv.org/abs/2210.17323)

- [Safetensors](https://github.com/huggingface/safetensors) weight loading

- Watermarking with [A Watermark for Large Language Models](https://arxiv.org/abs/2301.10226)

@@ -19,7 +19,6 @@ Text Generation Inference implements many optimizations and features, such as:

- Stop sequences

- Log probabilities
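As a rough illustration of the token-streaming item in the list above, the sketch below shows how a client might reassemble text from a Server-Sent Events stream. It is not taken from the TGI codebase: the helper name `iter_sse_tokens` is hypothetical, and the payload shape (one `data:` line per event, carrying JSON with a `token.text` field) is an assumption made for the example. The sample stream is hard-coded so the snippet runs without a server.

```python
import json
from typing import Iterable, Iterator


def iter_sse_tokens(lines: Iterable[str]) -> Iterator[str]:
    """Yield token text from Server-Sent Events lines.

    Assumption: each event is a single `data:` line whose payload is a
    JSON object with a `token.text` field. Adjust for the real schema.
    """
    for raw in lines:
        line = raw.strip()
        # Blank lines separate SSE events; lines starting with ":" are comments.
        if not line or line.startswith(":"):
            continue
        # Ignore other SSE fields such as "event:" or "id:".
        if not line.startswith("data:"):
            continue
        payload = json.loads(line[len("data:"):].strip())
        token = payload.get("token") or {}
        text = token.get("text")
        if text is not None:
            yield text


# Hard-coded sample standing in for a live stream (hypothetical payloads).
sample_stream = [
    'data:{"token": {"text": "Hello", "logprob": -0.1}}',
    "",
    'data:{"token": {"text": " world", "logprob": -0.3}}',
    "",
]

print("".join(iter_sse_tokens(sample_stream)))
```

In a real client the `lines` argument would come from an HTTP response read line by line; keeping the parser separate from the transport makes it easy to test against recorded streams.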

TODO: Add something more visual here

Text Generation Inference is used in production by multiple projects, such as:
