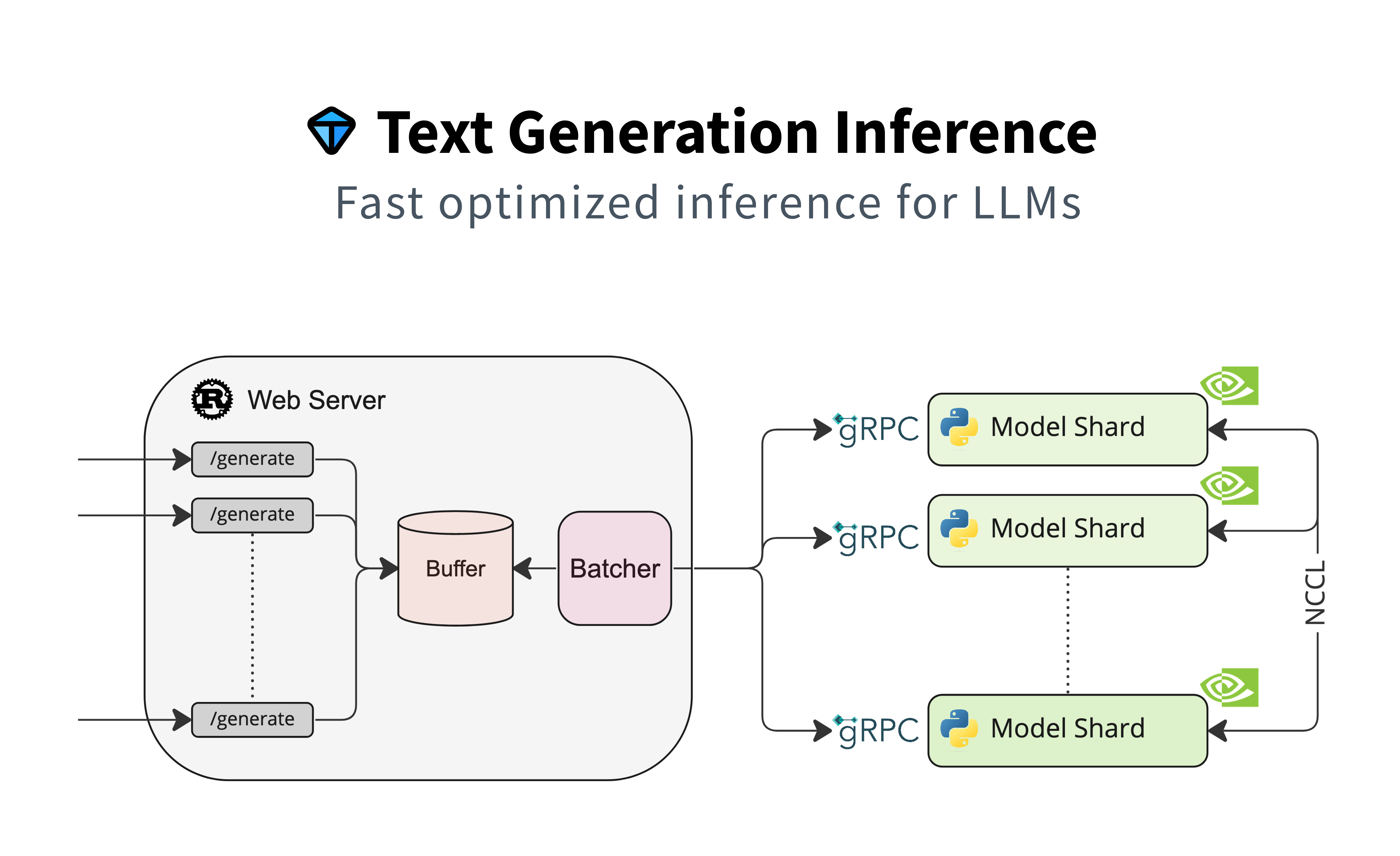

# Text Generation Inference
