Mirror of https://github.com/huggingface/text-generation-inference.git

synced 2025-09-12 04:44:52 +00:00

Add links to Adyen blogpost

Parent: a3c9c62dc0
Commit: 7dee5e359e

@@ -189,7 +189,7 @@ overridden with the `--otlp-service-name` argument


Detailed blogpost by Adyen on TGI inner workings: [LLM inference at scale with TGI](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)


Detailed blogpost by Adyen on TGI inner workings: [LLM inference at scale with TGI (Martin Iglesias Goyanes - Adyen, 2024)](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)


### Local install


docs/source/conceptual/external.md (new file, +4 lines)


@@ -0,0 +1,4 @@


# External sources


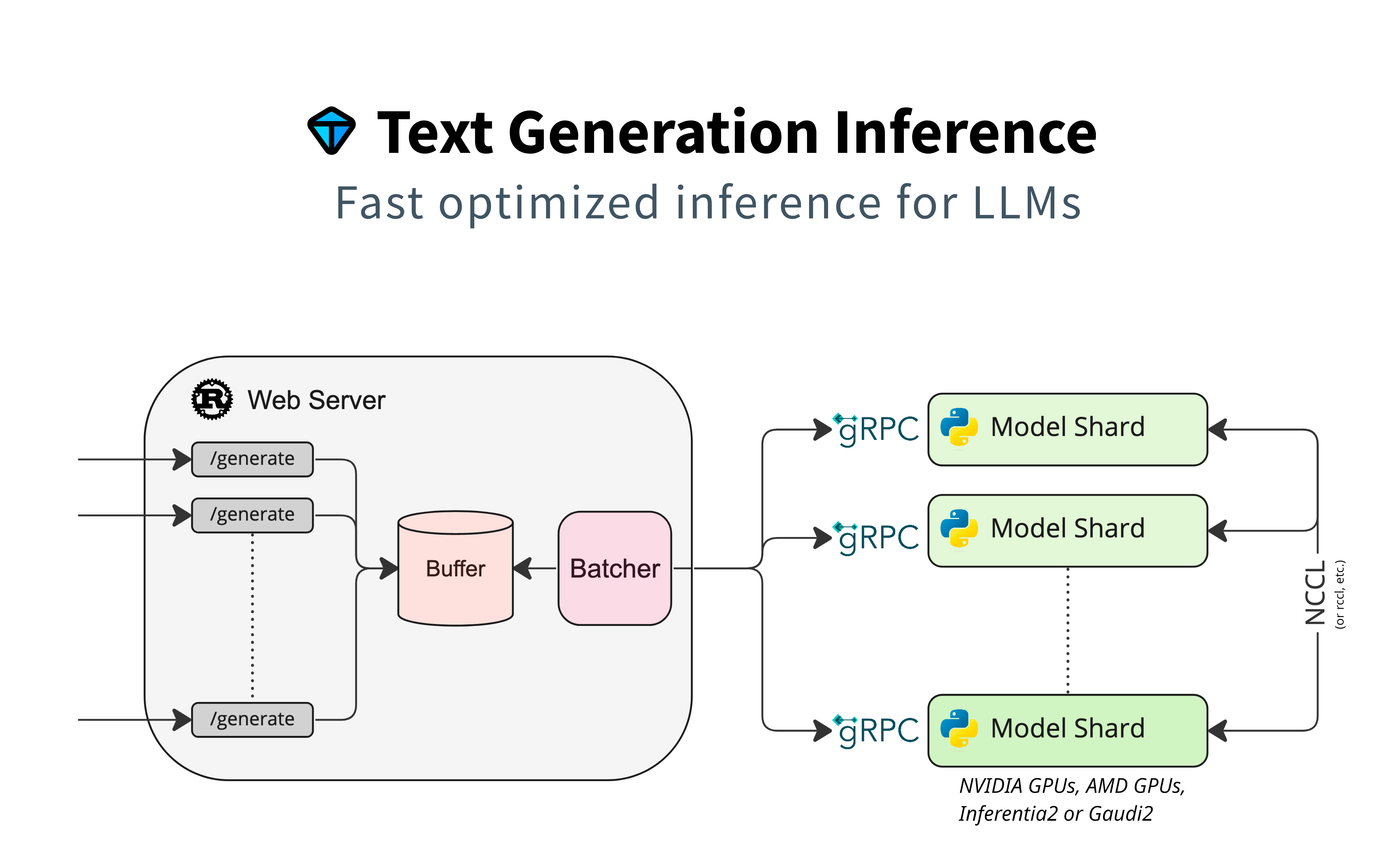

- Adyen wrote a detailed article about the interplay between TGI's main components: router and server.


[LLM inference at scale with TGI (Martin Iglesias Goyanes - Adyen, 2024)](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)


@@ -155,7 +155,3 @@ SSEs are different than:


* WebSockets: where there is a bi-directional connection. The server can send information to the client, and the client can also send data to the server after the initial request. WebSockets are more complex to operate because they don't only use HTTP.


If there are too many requests at the same time, TGI returns an HTTP error with an `overloaded` error type (`huggingface_hub` raises `OverloadedError`). This lets the client handle the overloaded server gracefully, e.g. by displaying a busy message to the user or by retrying the request later. To configure the maximum number of concurrent requests, pass `--max-concurrent-requests` to the launcher; requests beyond that limit are rejected with this error, which lets clients handle backpressure.
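A client can implement the "retry" option mentioned above with exponential backoff and jitter, so that many clients do not hammer an already overloaded server in lockstep. The following is a minimal, library-agnostic sketch, assuming the client surfaces the overload as an exception. `OverloadedError` here is a hypothetical local stand-in (not imported from `huggingface_hub`), and `call_with_backoff` and its parameters are illustrative names, not part of TGI or any client library.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class OverloadedError(Exception):
    """Hypothetical stand-in for a client-side overload error
    (e.g. the one raised by huggingface_hub)."""


def call_with_backoff(
    request: Callable[[], T],
    max_retries: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `request`, retrying with exponential backoff while the server is overloaded."""
    for attempt in range(max_retries + 1):
        try:
            return request()
        except OverloadedError:
            if attempt == max_retries:
                raise  # give up and surface the "busy" error to the caller
            # Exponential backoff capped at max_delay, with full jitter so
            # concurrent clients spread out their retries.
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")


# Usage: a fake request that is "overloaded" twice, then succeeds.
attempts = {"n": 0}


def flaky_request() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise OverloadedError("overloaded")
    return "generated text"


print(call_with_backoff(flaky_request, sleep=lambda s: None))  # generated text
```

Injecting `sleep` keeps the helper easy to test; in a real client you would keep the default `time.sleep` and wrap the actual generation call in `request`.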


## External sources


Adyen wrote a nice recap of how TGI's streaming feature works: [LLM inference at scale with TGI](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)

