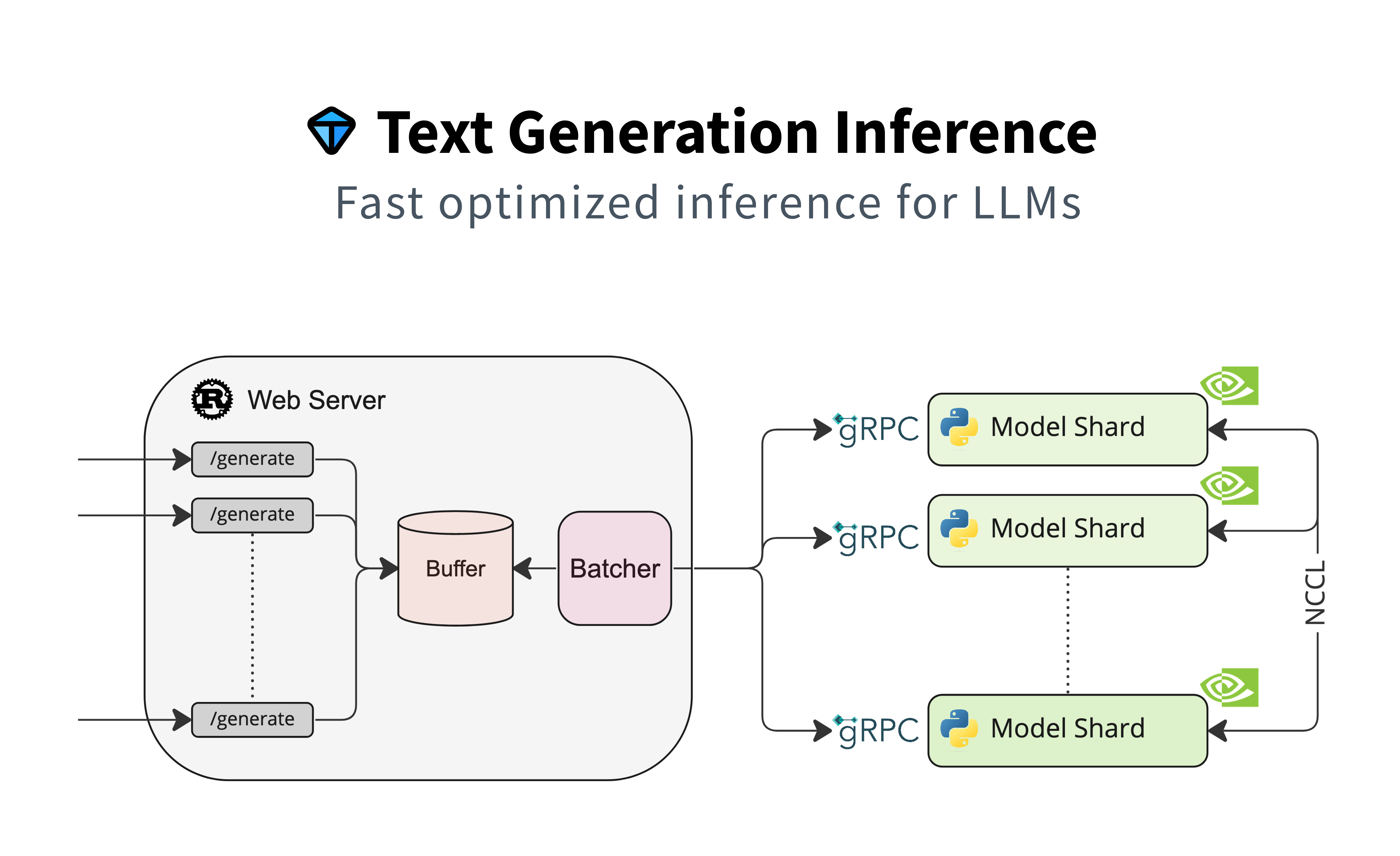

# Text Generation Inference
